It’s not just nuclear war any more. Recently, science fiction has been discussing a number of threats that have the potential to wipe out a large chunk of humanity–weaponized genetically engineered diseases, lack of biodiversity in food sources, the effects of global warming, and out-of-control nanotechnology. To me, one of the most interesting threats is also the most cliched science-fiction trope, yet it actually seems like a real possibility in the next fifty years: the singularity.

What’s a singularity?

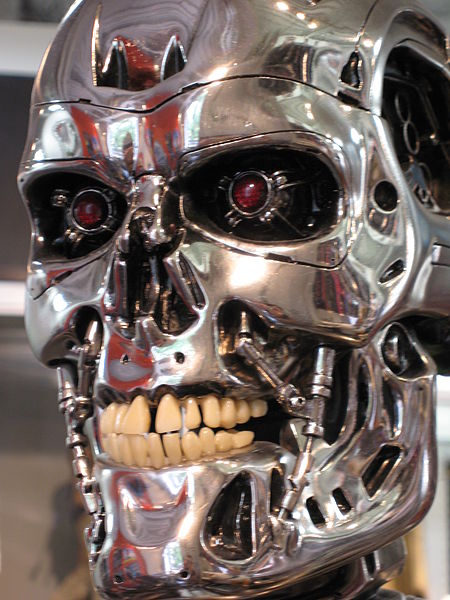

The singularity is the birth of the sentient computer, portrayed in The Terminator, The Matrix, Transcendence, Her, even Philip K. Dick’s “Second Variety”–basically any story where a computer becomes sentient and starts killing people. While most of these stories focus on the moment the computer becomes sentient, that won’t be the key factor that results in the singularity. Rather, the key factor is the ability of the machine to improve itself. Once these machines can self-improve and dream up ideas that people can’t, the growth in the machine’s abilities could be exponential, allowing it to quickly eclipse humanity.

Why now?

The singularity is interesting now because of the degree to which the world is interconnected and because of cloud computational models. The interconnected bit is obvious–almost every first-world person and corporation is connected to the Internet, and, with the rise of smart homes, soon most consumer electronics will be as well. Of course, they all have security, but hackers are already exploiting flaws to break into many systems. It’s reasonable to believe that a computer much smarter than any hacker would have little trouble hacking these systems.

The cloud computational models are noteworthy because they provide a simple path to exponential growth in processing power. One of the common design models for processing data in the cloud is to take a big problem, divide it up into smaller problems, and run each of the smaller problems on its own computer. You grab the computer from a pile of identical machines when you need it, and release it when you’re done. Thus, we’re already putting in place the infrastructure for expanding processing power as needed and the ability for computers to decide when they need more of it. This is still a long way away from a singularity, but it’s a step on the path.
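To make that design model concrete, here is a minimal sketch in Python. It is only an illustration: a local thread pool stands in for the pile of identical cloud machines, and the function names and the toy problem (summing squares) are my own assumptions, not any particular cloud provider’s API.

```python
from concurrent.futures import ThreadPoolExecutor


def split(data, chunk_size):
    """Divide one big problem into smaller, independent pieces."""
    return [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]


def solve_piece(chunk):
    """Hypothetical small problem: sum the squares in one chunk."""
    return sum(x * x for x in chunk)


def solve(data, chunk_size=1000, workers=4):
    """Split the problem, farm the pieces out, and combine the answers."""
    chunks = split(data, chunk_size)
    # The pool stands in for the "pile of identical machines": workers are
    # grabbed when the with-block starts and released when it ends.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(solve_piece, chunks))
    return sum(partials)


if __name__ == "__main__":
    print(solve(list(range(10)), chunk_size=3, workers=2))
```

Notice that asking for more processing power is just a matter of raising `workers`. In a real cloud setup that number is often chosen by software rather than by a person, which is the point of the paragraph above.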

What can be done?

Asimov suggested the Three Laws of Robotics in his novels:

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

While this is a good start, it’s unclear how to implement these laws in real life. Asimov implies the Laws of Robotics are inherent in the manufacture of the robot’s positronic brain, but that’s basically a hand-wavy description without any real substance. Creating such rules for a true singularity seems challenging, particularly since the constraints would have to be robust enough to restrain a being significantly smarter and more capable than the humans who created them.

To me, it feels like the safest option is to not use constraints at all, because it’s normal to look for ways around constraints. Instead, my hand-wavy suggestion is to figure out a way to make the machine not want to be a jerk, so that it focuses its abilities constructively rather than on overcoming restrictions in its programming.

The bottom line

This seems like one of those problems, like global warming and genetically engineered diseases, that people aren’t good at solving. People tend to not care about a threat until they’ve seen it happen once, and it’s way sexier to create a sentient computer than to try to restrain one. But unfortunately, the singularity also has the tipping point property–you only have to fail once. After an out-of-control singularity happens, it’s likely too late to say, “Oh, so the singularity is a threat. We should do something about that….” There’s no way to put it all back into Pandora’s box.

To make matters worse, even if the vast majority of the world is responsible about the creation and management of singularities, it seems likely that in time, creating a singularity won’t be a huge effort requiring the most brilliant minds in the world. Instead, perhaps a single person or several people working together will be able to create one. So to avoid an out-of-control singularity, you’re relying on the intelligence, sanity, and goodwill of everyone on Earth.

Good luck with that.

Recall considering this possibility many years ago when Asimov was formulating his laws. Interesting to see the issue recirculate.


Yes. I think it’s hitting the headlines again because it’s becoming close to real, and tech visionaries like Elon Musk are starting to speak about it.

http://mashable.com/2014/11/17/elon-musk-singularity/
